Abstract

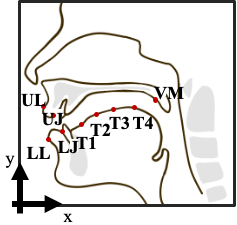

Although deep learning algorithms are widely used to improve speech enhancement (SE) performance, that performance remains limited under highly challenging conditions, such as unseen noise or signals with low signal-to-noise ratios (SNRs). This study presents a pilot investigation of a novel multimodal audio-articulatory-movement SE (AAMSE) model designed to improve SE performance under such conditions. Articulatory movement features and acoustic signals were used as inputs to waveform-mapping-based and spectral-mapping-based SE systems with three fusion strategies. In addition, an ablation study was conducted to evaluate SE performance using only a limited number of articulatory movement sensors. Experimental results confirm that, by combining the two modalities, the AAMSE model notably improves speech quality and intelligibility compared with conventional audio-only SE baselines.